Today we are going to learn how to migrate users into Drupal. The example code will be explained in two blog posts. In this one, we cover the migration of email, timezone, username, password, and status. In the next one, we will cover creation date, roles, and profile pictures. Several techniques will be implemented to ensure that the migrated data is valid. For example, we will make sure that usernames are not duplicated.

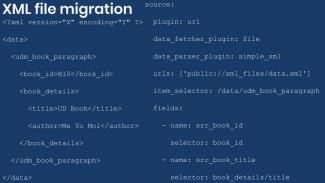

Although the example is standalone, we will build on many of the concepts that have already been covered in the series. For instance, a file migration is included to import images used as profile pictures. This topic has been explained in detail in a previous post, and the example code is pretty similar. Therefore, no explanation is provided about the file migration to keep the focus on the user migration. Feel free to read other posts in the series if you need a refresher.

Getting the code

You can get the full code example at https://github.com/dinarcon/ud_migrations. The module to enable is UD users, whose machine name is ud_migrations_users. The two migrations to execute are udm_user_pictures and udm_users. Notice that both migrations belong to the same module. Refer to this article to learn where the module should be placed.

The example assumes Drupal was installed using the standard installation profile. In particular, we depend on a Picture (user_picture) image field attached to the user entity. The word in parentheses represents the machine name of the image field.

The explanation below is only for the user migration. It depends on a file migration to get the profile pictures. One motivation to have two migrations is for the images to be deleted if the file migration is rolled back. Note that other techniques exist for migrating images without having to create a separate migration. We have covered two of them in the articles about subfields and constants and pseudofields.

Understanding the source

It is very important to understand the format of your source data. This will guide the transformation process required to produce the expected destination format. For this example, it is assumed that the legacy system from which users are being imported did not have unique usernames. Emails were used to uniquely identify users, but that is not desired in the new Drupal site. Instead, a username will be created from a public_name source column. Special measures will be taken to prevent duplication as Drupal usernames must be unique. Two more things to consider. First, source passwords are provided in plain text (never do this!). Second, some elements might be missing in the source like roles and profile picture. The following snippet shows a sample record for the source section:

    source:
      plugin: embedded_data
      data_rows:
        - legacy_id: 101
          public_name: 'Michele'
          user_email: 'micky@example.com'
          timezone: 'America/New_York'
          user_password: 'totally insecure password 1'
          user_status: 'active'
          member_since: 'January 1, 2011'
          user_roles: 'forum moderator, forum admin'
          user_photo: 'P01'
      ids:
        legacy_id:
          type: integer

Configuring the destination and dependencies

The destination section specifies that user is the target entity. When that is the case, you can set an optional md5_passwords configuration. If it is set to true, the system will take an MD5-hashed password and convert it to the hashing algorithm that Drupal uses. For more information on password migrations, refer to these articles for basic and advanced use cases. To migrate the profile pictures, a separate migration is created. The dependency of the user migration on the file migration is added explicitly. Refer to these articles for more information on migrating images and files and setting dependencies. The following code snippet shows how the destination and dependencies are set:

    destination:
      plugin: 'entity:user'
      md5_passwords: true
    migration_dependencies:
      required:
        - udm_user_pictures
      optional: []

Processing the fields

The interesting part of a user migration is the field mapping. The specific transformation will depend on your source, but some arguably complex cases will be addressed in the example. Let’s start with the basics: verbatim copies from source to destination. The following snippet shows three mappings:

    mail: user_email
    init: user_email
    timezone: timezone

The mail, init, and timezone entity properties are copied directly from the source. Both mail and init are email addresses. The difference is that mail stores the current email, while init stores the one used when the account was first created. The former might change if the user updates their profile, while the latter never changes. The timezone needs to be a string taken from a specific set of values. Refer to this page for a list of supported timezones.
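If some source records had no timezone, one option would be to fall back to a fixed value with the default_value process plugin. The following is a minimal sketch and not part of the example module. The choice of UTC as the fallback is an assumption, and the source column name is the one used in the sample record shown earlier:

```yaml
process:
  timezone:
    # default_value only takes effect when the source value is empty.
    plugin: default_value
    source: timezone
    # Assumption: UTC is an acceptable fallback for this site.
    default_value: 'UTC'
```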

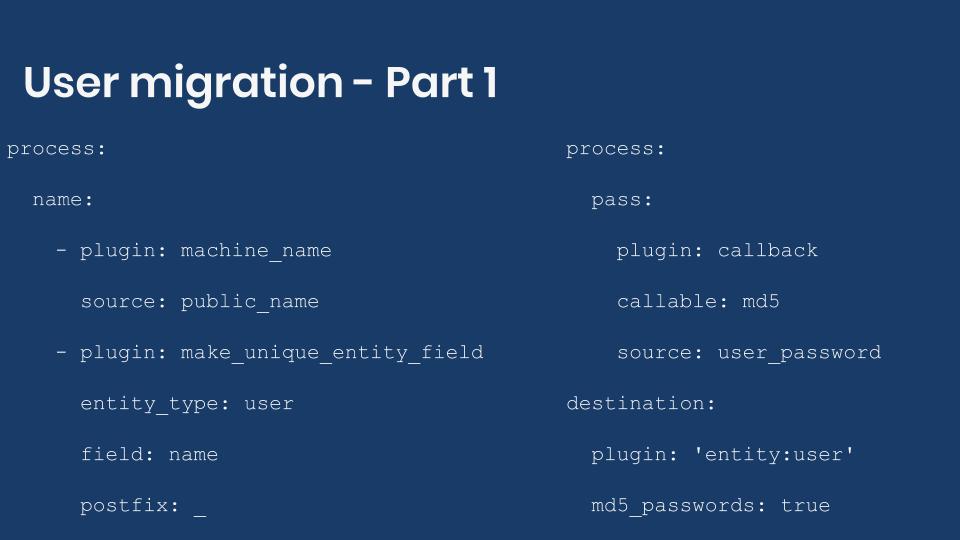

    name:
      - plugin: machine_name
        source: public_name
      - plugin: make_unique_entity_field
        entity_type: user
        field: name
        postfix: _

The name entity property stores the username. This has to be unique in the system. If the source data contained a unique value for each record, it could be used to set the username. None of the unique source columns (e.g., legacy_id) is suitable to be used as a username. Therefore, extra processing is needed. The machine_name plugin converts the public_name source column into a transliterated, lowercase string with some restrictions: any character that is not a number or letter will be converted to an underscore. The transformed value is sent to the make_unique_entity_field plugin. This plugin makes sure its input value is not repeated in the whole system for a particular entity field. In this example, the username will be unique. The plugin is configured by indicating which entity type and field (property) you want to check. If an equal value already exists, a new one is created by appending what you define as postfix plus a number. In this example, there are two records with public_name set to Benjamin. Eventually, the usernames produced by running the process plugin chain will be: benjamin and benjamin_1.
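To make the duplicate case concrete, consider two source records that share the same public_name. The sketch below is only illustrative: the legacy_id values are hypothetical and the remaining columns are omitted for brevity. The comments show the usernames mentioned above, assuming the records are imported in this order and no user named benjamin exists yet:

```yaml
source:
  plugin: embedded_data
  data_rows:
    # Hypothetical IDs; other columns omitted for brevity.
    - legacy_id: 104
      public_name: 'Benjamin'   # name becomes: benjamin
    - legacy_id: 105
      public_name: 'Benjamin'   # name becomes: benjamin_1
  ids:
    legacy_id:
      type: integer
```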

    process:
      pass:
        plugin: callback
        callable: md5
        source: user_password
    destination:
      plugin: 'entity:user'
      md5_passwords: true

The pass entity property stores the user’s password. In this example, the source provides the passwords in plain text. Needless to say, that is a terrible idea. But let’s work with it for now. Drupal uses portable PHP password hashes implemented by PhpassHashedPassword. Understanding the details of how Drupal converts one algorithm to another will be left as an exercise for the curious reader. In this example, we are going to take advantage of a feature provided by the migrate API to automatically convert MD5 hashes to the algorithm used by Drupal. The callback plugin is configured to use the md5 PHP function to convert the plain text password into a hashed version. The last piece of the puzzle is to set the md5_passwords configuration to true in the destination section. This will take care of converting the already MD5-hashed password to the value expected by Drupal.

Note: MD5-hashed passwords are insecure. In the example, the password is hashed with MD5 as an intermediate step only. Drupal uses other algorithms to store passwords securely.
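If the legacy system had stored MD5 hashes instead of plain text, the callback step would presumably not be needed. Here is a minimal sketch under that assumption, where user_password_md5 is a hypothetical source column that already holds the MD5 hash:

```yaml
process:
  # Hypothetical column that already contains an MD5 hash,
  # so no callback plugin is required.
  pass: user_password_md5
destination:
  plugin: 'entity:user'
  # Tells the destination to treat the incoming value as an MD5 hash.
  md5_passwords: true
```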

    status:
      plugin: static_map
      source: user_status
      map:
        inactive: 0
        active: 1

The status entity property stores whether a user is active or blocked from the system. The source user_status values are strings, but Drupal stores this data as a boolean. A value of zero (0) indicates that the user is blocked, while a value of one (1) indicates that the account is active. The static_map plugin is used to manually map the values from source to destination. This plugin expects a map configuration containing an array of key-value mappings. The value from the source is on the left. The value expected by Drupal is on the right.

Technical note: Booleans are true or false values. Even though Drupal treats the status property as a boolean, it is internally stored as a tiny int in the database. That is why the numbers zero or one are used in the example. For this particular case, using a number or a boolean value on the right side of the mapping produces the same result.
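The map above lists two statuses. If the source might contain other values, the static_map plugin also accepts a default_value setting. The following is a minimal sketch, assuming that any unlisted status should result in a blocked account:

```yaml
status:
  plugin: static_map
  source: user_status
  map:
    inactive: 0
    active: 1
  # Assumption: any status not listed above is treated as blocked.
  default_value: 0
```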

In the next blog post, we will continue with the user migration. Particularly, we will explain how to migrate the user creation time, roles, and profile pictures.

What did you learn in today’s blog post? Have you migrated user passwords before, either in plain text or hashed? Did you know how to prevent duplicates for values that need to be unique in the system? Were you aware of the plugin that allows you to manually map values from source to destination? Please share your answers in the comments. Also, I would be grateful if you shared this blog post with others.

Next: Migrating users into Drupal - Part 2

This blog post series, cross-posted at UnderstandDrupal.com as well as here on Agaric.coop, is made possible thanks to these generous sponsors. Contact Understand Drupal if your organization would like to support this documentation project, whether it is the migration series or other topics.
